Docker Sandboxes Tutorial and Cheatsheet

Docker Sandboxes lets AI coding agents like Claude Code run safely in isolated containers. Get full autonomy without compromising your localhost security. Requires Docker Desktop 4.50+.

Ever seen "Compacting our conversation so we can keep chatting..." in Claude? It's not a bug—it's a feature that lets you have 100k+ token conversations without losing context. Here's how to leverage it for complex Docker and AI projects. 🧵

A comprehensive deep dive into GPU orchestration in Kubernetes — from device plugins and the GPU Operator to advanced sharing strategies like MIG, MPS, and time-slicing. Learn how to schedule, monitor, and optimize GPU workloads for AI/ML at scale.

Robotics is at an inflection point. We're witnessing a fundamental shift from single-purpose, fixed-function robots to generalist machines that can adapt, reason, and perform diverse tasks across unpredictable environments. This transformation demands something unprecedented: the ability to run massive generative AI models—large language models (LLMs), vision language

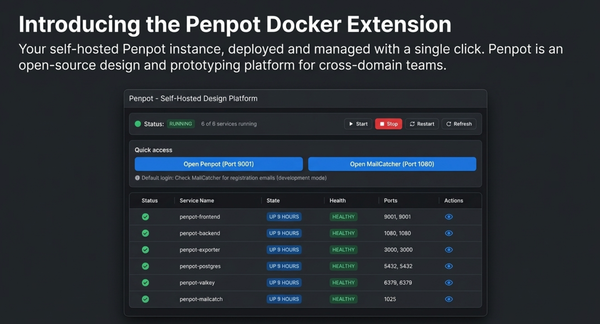

I am thrilled to share the release of the Penpot Docker Extension, a tool designed to streamline the deployment and management of a complete self-hosted Penpot instance directly within Docker Desktop.

Quantization = compressing a model by lowering the precision of numbers, making it smaller, faster, and cheaper to run, often with only a small drop in accuracy.
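To make the definition concrete, here is a minimal sketch of one common scheme, symmetric int8 quantization with a single shared scale. The function names and sample weights are made up for illustration; real model quantizers typically work per-channel or per-block and use more elaborate formats.

```python
def quantize_int8(values):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Guard against an all-zero input, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.5, 0.33, 0.9, -0.07]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Largest difference between an original and a restored value.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, scale, max_error)
```

Each value is now stored as one small integer plus a shared scale instead of a full-precision float, and the rounding error per value stays within about half of one scale step. That is the "small drop in accuracy" traded for a smaller footprint.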

From Claude Desktop to Cursor: A complete breakdown of which AI chat interfaces support MCP—and which ones are worth your time. Because in 2025, your AI assistant should do more than just talk.

No monitor? No problem. Learn how a simple USB Type-C charging cable and serial console access saved a student demo at our Docker meetup.

What if your AI chatbot could configure itself based on what customers ask, without developers editing config files? That's Dynamic MCP.

Want to add vision capabilities to your applications without sending data to external APIs? Docker Model Runner makes it straightforward to run multimodal AI models locally, giving you complete control over your data while using the familiar OpenAI-compatible API format.

This guide walks you through connecting models from the Docker AI Model Catalog to MCP servers, enabling your applications to leverage both local inference and external capabilities in a secure, reproducible Docker Compose environment.

Stop wasting hours setting up MCP servers. The Docker MCP Catalog provides 270+ enterprise-grade, containerized Model Context Protocol servers that install in seconds—no dependency hell, no environment conflicts, no cross-platform issues.