Ghost SDLC

I was reading Citrini Research's "2028 Global Intelligence Crisis" report this morning, the one that briefly rattled tech stocks last week. The term that stuck with me wasn't the recession scenario. It was "Ghost GDP".

Ghost GDP is economic output that shows up in headline numbers but never circulates. AI replaced the workers who would have earned and spent that money. GDP looks fine. Most people feel nothing.

I put the paper down and thought: we're building the exact same thing in software.

Ghost SDLC is software delivered without a human accountability chain. Agents produce, metrics look great, PRs get merged, dashboards stay green. But when something breaks at 2am, when a regulator asks why a decision was made, when a customer's data is compromised, the answer to "who owns this?" is a ghost.

Here's where I'd push back on the obvious counterargument, because I've heard it already: "Agents understand code better than humans. Just ask the agent."

True. Give Claude a 10,000-line codebase and it'll explain it, trace bugs, suggest fixes, faster and more accurately than most engineers. Agents absolutely can understand code. That's not the problem.

The problem is that agents cannot be held accountable. Not legally. Not professionally. Not ethically. When a human makes an architectural decision, their name is in Git. They were in the meeting. They can be asked, and they can be held responsible. When an agent makes the same decision, that chain doesn't exist unless you deliberately build it in.

Most teams in 2026 are not building it in.

The practical test is simple. Pick any feature your agents shipped in the last 60 days. Now ask: if this causes a data breach tomorrow, who is the named human accountable for the decisions inside it? Can they articulate why those decisions were made? Do they have a paper trail?

If the answer is "we'd ask the agent to investigate", that's Ghost SDLC.

What makes this different from ordinary ownership ambiguity is that the warning signs vanish while the metrics stay green. Traditional accountability gaps surface as friction: engineers say they're nervous to touch a codebase they don't understand. With agentic AI, that friction disappears. Just ask the agent to modify it. Problem solved, until you need a human judgment call: a regulatory exception, an ethical tradeoff, a decision that requires someone to actually sign their name.

And nobody has the context, because nobody built the accountability chain.

Not all agents create Ghost SDLC. There's a meaningful difference in how they're built.

A bad agent ships code and disappears. No log of what it decided, no rationale, no named human who reviewed the output before it hit production. It optimises for task completion. Accountability is someone else's problem.

A good agent leaves a trail. Every tool call logged. Every architectural choice explained in plain language before the PR is opened. It surfaces the decision to a human and waits for sign-off rather than assuming approval. It's slower. That's the point.
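To make the "good agent" behaviour concrete, here is a minimal sketch of a decision record that blocks the PR until a named human signs off. Everything in it (`DecisionRecord`, `open_pr`, the field names) is hypothetical and invented for illustration; it is not the API of any real agent framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionRecord:
    """One agent decision, held until a named human signs it off (hypothetical)."""
    summary: str                                   # what the agent chose
    rationale: str                                 # why, in plain language
    tool_calls: list[str] = field(default_factory=list)
    approved_by: Optional[str] = None              # a named human, never the agent
    approved_at: Optional[datetime] = None

    def log_tool_call(self, call: str) -> None:
        # Every tool call is appended so the trail can be read back later.
        self.tool_calls.append(call)

    def sign_off(self, reviewer: str) -> None:
        # Approval must carry a name; an empty reviewer is rejected.
        if not reviewer.strip():
            raise ValueError("sign-off needs a named human reviewer")
        self.approved_by = reviewer
        self.approved_at = datetime.now(timezone.utc)


def open_pr(record: DecisionRecord) -> str:
    """Refuse to proceed without a rationale and an explicit approval."""
    if not record.rationale.strip():
        raise PermissionError("no rationale recorded")
    if record.approved_by is None:
        # The agent waits here instead of assuming approval.
        raise PermissionError("waiting for human sign-off")
    return f"PR opened: {record.summary} (approved by {record.approved_by})"
```

The gate is deliberately dumb. It does not judge whether the decision is good; it only guarantees that a human name is attached before anything moves forward.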

The difference isn't intelligence. Both agents can write excellent code. The difference is whether accountability was designed in or left out entirely.

The fix isn't to stop using agents. It's to build accountability infrastructure into your agentic SDLC from day one. That means named owners on every agent-generated module, decision logs that capture why, not just what, and explicit sign-off workflows before anything hits production.
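As a rough sketch of what checking for that infrastructure could look like, the snippet below scans a list of modules and reports which agent-generated ones are missing an owner, a decision log, or a sign-off. The `ModuleRecord` shape and the `accountability_gaps` function are assumptions made up for this example; where the data actually lives (a manifest, PR metadata, a CODEOWNERS-style file) will depend on your setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleRecord:
    """Hypothetical per-module accountability metadata."""
    path: str
    agent_generated: bool
    owner: str          # named human; empty string means nobody
    decision_log: str   # pointer to the recorded "why"; empty means missing
    signed_off: bool    # explicit human approval before production


def accountability_gaps(modules: list[ModuleRecord]) -> dict[str, list[str]]:
    """Map each agent-generated module path to the pieces it is missing."""
    gaps: dict[str, list[str]] = {}
    for m in modules:
        if not m.agent_generated:
            continue  # human-written code is out of scope for this check
        missing = []
        if not m.owner.strip():
            missing.append("named owner")
        if not m.decision_log.strip():
            missing.append("decision log")
        if not m.signed_off:
            missing.append("sign-off")
        if missing:
            gaps[m.path] = missing
    return gaps
```

Run as a pre-merge check, a non-empty result is the Ghost SDLC test failing in advance rather than at 2am.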

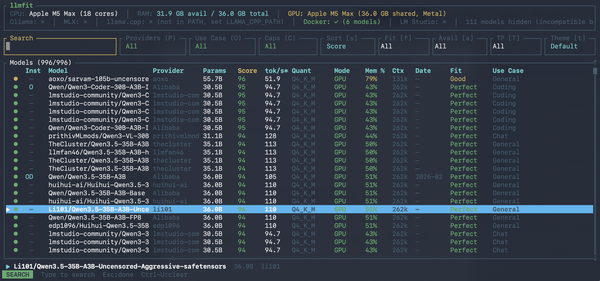

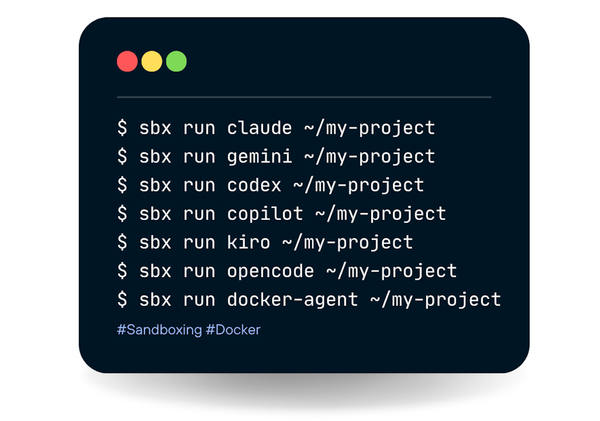

Tools like Docker MCP Gateway help here. When every tool call your agent makes is logged and inspectable, you at least have the raw material to reconstruct a decision trail. But tooling alone doesn't create accountability. That requires deliberate process.
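To illustrate the gap between raw logs and accountability, here is a small sketch that groups tool-call events into per-task trails and then lists the tasks where no human ever appears. The JSON-lines format and its fields (`ts`, `task_id`, `actor_type`, `action`) are invented for this example; this is not Docker MCP Gateway's actual log schema, so treat it as a stand-in for whatever your tooling emits.

```python
import json
from collections import defaultdict


def build_trails(log_lines):
    """Group events by task and order them by timestamp.

    Assumes one JSON object per line with ISO 8601 UTC timestamps in "ts",
    which sort correctly as plain strings. The schema is hypothetical.
    """
    trails = defaultdict(list)
    for line in log_lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        event = json.loads(line)
        trails[event["task_id"]].append(event)
    for events in trails.values():
        events.sort(key=lambda e: e["ts"])
    return dict(trails)


def tasks_without_human(trails):
    """Return task ids whose trail contains no human-attributed event."""
    return sorted(
        task_id
        for task_id, events in trails.items()
        if not any(e.get("actor_type") == "human" for e in events)
    )
```

The first function is what tooling gives you: a reconstruction of what happened. The second is the process question, and the logs can only answer it if a human sign-off step existed to be logged in the first place.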

Ghost GDP produces more output with fewer people benefiting. Ghost SDLC produces more features with fewer humans willing to put their name on them.

Don't build Ghost SDLC.