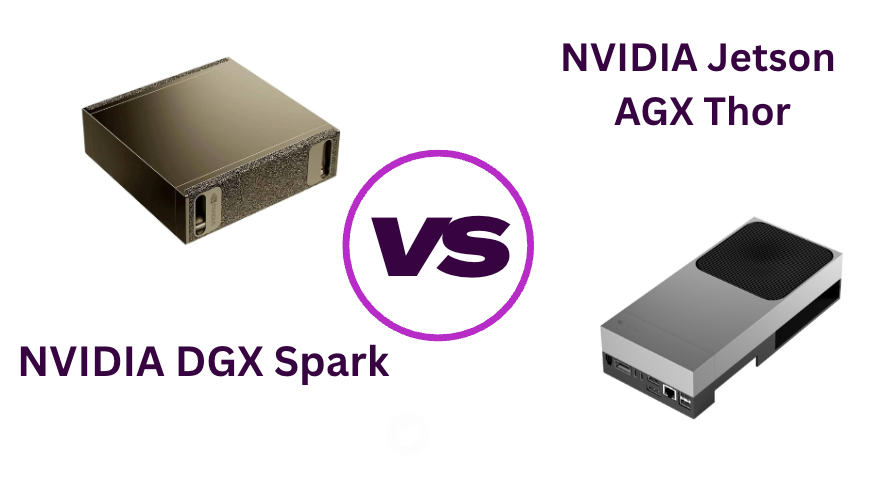

NVIDIA DGX Spark vs Jetson AGX Thor: Which one should you buy?

Two NVIDIA Blackwell machines. Both fit on your desk. Prices within a few hundred dollars of each other. And yet — buying the wrong one is a $3,500 mistake you'll feel every day.

I've spent the last few months watching both machines get benchmarked, stress-tested, and argued over on forums. The confusion is understandable. NVIDIA, in its infinite wisdom, released two compact Blackwell devices within months of each other without making it obvious which one you should actually want. This post is my attempt to cut through the marketing fog.

Short version: these are not competitors. They solve completely different problems. The confusion comes from the fact that they look similar on a spec sheet and cost roughly the same. Once you understand what each machine was actually built to do, the decision becomes obvious.

What is the DGX Spark, actually?

The NVIDIA DGX Spark GB10 is what NVIDIA calls "the world's smallest AI supercomputer." Originally announced at CES 2025 as Project DIGITS, it finally shipped in October 2025 — after a delay that pushed it from the original May window. It's powered by the GB10 Grace Blackwell Superchip, which pairs a 20-core Arm CPU (Grace architecture) with a Blackwell GPU in a unified package.

The headline numbers: 1 petaFLOP of FP4 AI compute, 128 GB of unified LPDDR5x memory, and up to 4 TB of internal storage. It runs DGX OS, NVIDIA's Ubuntu 24.04-based distribution, with the company's AI software stack pre-installed: CUDA, cuDNN, NIM, the works. The idea is that a developer, researcher, or AI team can run models with up to 200 billion parameters locally, no cloud required.
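To see why 200 billion parameters is the quoted ceiling for 128 GB, a rough back-of-the-envelope calculation helps. The sketch below is an estimate, not an NVIDIA formula: it assumes weights dominate memory use and ignores the KV cache, activations, and OS overhead, and the function name `weight_footprint_gb` is made up for illustration.

```python
UNIFIED_MEMORY_GB = 128  # unified memory figure quoted above


def weight_footprint_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = weight_footprint_gb(200, bits)
        verdict = "fits" if gb < UNIFIED_MEMORY_GB else "does not fit"
        print(f"200B parameters at {bits}-bit: ~{gb:.0f} GB of weights ({verdict} in {UNIFIED_MEMORY_GB} GB)")
```

Under these assumptions, only the 4-bit case leaves any headroom, which suggests the 200B figure relies on FP4-class quantization. Real usable capacity will be lower once context length and runtime overhead are counted.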

NVIDIA is explicit: the DGX Spark is meant to be a developer's companion, not a replacement for their workstation. You write code on your Mac or Windows PC, and the Spark handles the heavy inference and fine-tuning workloads beside it. You can also connect two Sparks together via ConnectX-7 networking to tackle 405B parameter models.

In one sentence

DGX Spark = a desktop AI lab for people who build and fine-tune models

Think: AI developer, ML researcher, enterprise team that wants to keep sensitive data on-prem. This is a machine for LLM inference, fine-tuning, and generative AI workloads.

What is the Jetson AGX Thor, actually?

The NVIDIA Jetson AGX Thor T5000 is a completely different beast wearing similar clothes. It's built around the Jetson T5000 module — a platform designed from the ground up for physical AI: robotics, autonomous vehicles, and real-time edge systems. The developer kit includes the actual Jetson module that would ship embedded inside a real robot.

On paper, its specs are staggering. The T5000 hits 2,070 TFLOPS at FP4 — more than double the DGX Spark's peak. It includes a third-generation Programmable Vision Accelerator (PVA), an optical flow accelerator, and Multi-Instance GPU (MIG) support that lets you slice the GPU into up to 7 independent partitions. That last feature is a big deal for AI pipelines that need to run multiple models simultaneously without expensive context switching.

It runs JetPack — NVIDIA's robotics-oriented OS — rather than a standard desktop Ubuntu. It ships with a 240W power adapter and a 100 GbE port for clustering multiple units together. The storage tops out at 1 TB, and the USB expansion is 5 Gbps (versus 10 Gbps on the Spark).

In one sentence

Jetson AGX Thor = the brain of a robot or autonomous edge system

Think: robotics engineer, physical AI platform developer, autonomous vehicle team, or anyone building systems that need to perceive and act in the real world at low latency.

⚠ One important note on availability: NVIDIA has said the Jetson AGX Thor Developer Kit is a limited release. When units sell out, that's it — NVIDIA expects customers to switch to buying the T5000 modules directly for production deployment. The DGX Spark, by contrast, is meant to be a permanent product line.

Spec comparison

| Spec | DGX Spark (GB10) | Jetson AGX Thor (T5000) |
|---|---|---|
| AI Compute (FP4) | 1,000 TFLOPS (1 PFLOP) | 2,070 TFLOPS |
| AI Compute (FP8) | ~500 TFLOPS | 1,035 TFLOPS |
| CPU | 20-core Grace (10×X925 + 10×A725) | 14-core Arm Neoverse V3AE |
| GPU Architecture | Blackwell SM 12.1 (~48 SMs, 6,144 CUDA cores) | Blackwell SM 11.0 (20 SMs, tcgen05+tmem) |
| Memory | 128 GB unified LPDDR5x | 128 GB unified LPDDR5x |
| Memory Bandwidth | ~273 GB/s | 273 GB/s |
| Storage | Up to 4 TB NVMe | 1 TB NVMe |
| USB Expansion | 10 Gbps | 5 Gbps |
| High-Speed Networking | ConnectX-7 (multi-node) | 100 GbE (multi-unit cluster) |
| MIG Support | No | Yes (up to 7 partitions) |
| Vision Accelerators | No | PVA Gen 3, Optical Flow Engine |
| Operating System | DGX OS (Ubuntu 24.04 base) + NVIDIA AI stack | JetPack (Ubuntu 24.04 LTS base) |
| Form Factor | 150 × 150 × 50.5 mm (tiny cube) | Developer kit with full I/O board |
| Max Param Models | ~200B (single); ~405B (2× linked) | ~200B |
| Target Use Case | AI development, LLM inference, fine-tuning | Robotics, autonomous systems, physical AI |
| Availability | Ongoing (permanent product) | Limited run developer kit |

What do the benchmarks actually say?

This is where things get counterintuitive. The Jetson AGX Thor has more than double the peak FP4 TFLOPS on paper. But in real-world LLM inference benchmarks using llama.cpp, the DGX Spark consistently wins.

According to benchmark data published by JetsonHacks in October 2025 using llama.cpp build b6767, the DGX Spark delivers roughly 1.36× higher token-generation throughput than the Jetson Thor across single-batch tests. On prefill throughput under concurrent loads, the gap widens to about 2× in Spark's favor. Across batch sizes and contexts, Spark sustains around 1.37× higher decode throughput.

How does a machine with half the rated TFLOPS win at LLM tasks? The answer lies in the architecture differences. The GB10's GPU has roughly 2.4 times the SM count (about 48 SMs versus 20) and a significantly higher GPU clock ceiling. The Thor's SM 11.0 architecture, with its newer tcgen05+tmem tensor core instructions, is optimized for structured, batched workloads: the kind you'd find in a production robot's AI pipeline, not in single-stream LLM generation.
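Putting the paper specs next to the measured result makes the mismatch concrete. This is just arithmetic on figures already quoted in this post (the FP4 ratings, the approximate SM counts, and the JetsonHacks single-batch ratio); the dictionary layout and variable names are my own.

```python
# Figures quoted in this post; the SM count for the Spark is approximate.
spark = {"fp4_tflops": 1000, "sms": 48}
thor = {"fp4_tflops": 2070, "sms": 20}
measured_decode_ratio = 1.36  # Spark / Thor, llama.cpp single-batch decode

# Ratios are all expressed as Spark divided by Thor.
paper_ratio = spark["fp4_tflops"] / thor["fp4_tflops"]
sm_ratio = spark["sms"] / thor["sms"]

print(f"Rated FP4 compute (Spark/Thor): {paper_ratio:.2f}x")
print(f"SM count (Spark/Thor):          {sm_ratio:.1f}x")
print(f"Measured decode (Spark/Thor):   {measured_decode_ratio:.2f}x")
```

The rated-compute ratio points one way and the measured ratio points the other, so peak FP4 throughput is clearly not the limiting factor in these llama.cpp runs.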

The moral: raw TFLOPS numbers don't tell you what a machine is good at. The Thor's architecture is purpose-tuned for the workloads that matter in physical AI — and for those workloads, its specialized hardware blocks (PVA, optical flow accelerator, MIG partitioning) are advantages the DGX Spark simply doesn't have.

The software ecosystem matters enormously

Hardware specs aside, the software story might be the most important differentiator for most buyers.

The DGX Spark runs a standard Ubuntu environment with NVIDIA's full AI stack pre-loaded. CUDA, cuDNN, NIM microservices, NeMo, TensorRT-LLM — everything works the way you'd expect from an NVIDIA cloud instance, just running locally. If you've ever spun up an NVIDIA GPU instance on AWS or Azure, you'll feel right at home. The path from local development to cloud deployment is seamless by design.

The Jetson AGX Thor runs JetPack. This is NVIDIA's robotics-oriented platform, built on Ubuntu 24.04 but augmented with the Isaac robotics stack, real-time OS capabilities, and hardware drivers for the T5000's specialized accelerators. It's not a traditional developer environment — it's an embedded systems platform. You can technically run LLMs on it, but you'd be ignoring most of what makes the hardware special.

This matters practically. If your team works with PyTorch, Hugging Face, LangChain, or any standard ML tooling, the DGX Spark plugs straight in. If you're working with ROS 2, Isaac Sim, or vision-language-action models for robot control, the Jetson Thor's ecosystem is purpose-built for you.
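If you end up with code that has to run on both machines, one practical check is the GPU's compute capability, which differs between them (SM 12.1 on the Spark, SM 11.0 on the Thor, per the table above). The sketch below uses PyTorch's `torch.cuda.get_device_capability`; the `KNOWN_PARTS` mapping and the `describe` helper are illustrative names of mine, and it assumes the PyTorch build installed on the device reports those capability values.

```python
# Compute capabilities taken from the spec table in this post.
KNOWN_PARTS = {
    (12, 1): "DGX Spark (GB10)",
    (11, 0): "Jetson AGX Thor (T5000)",
}


def describe(capability):
    """Turn a (major, minor) compute capability into a readable label."""
    major, minor = capability
    name = KNOWN_PARTS.get((major, minor), "another CUDA device")
    return f"SM {major}.{minor}: {name}"


def main():
    try:
        import torch  # optional: only needed for the live check
    except ImportError:
        print("PyTorch is not installed")
        return
    if not torch.cuda.is_available():
        print("No CUDA device visible")
        return
    print(torch.cuda.get_device_name(0))
    print(describe(torch.cuda.get_device_capability(0)))


if __name__ == "__main__":
    main()
```

A check like this is handy for picking the right prebuilt wheels or kernels at startup rather than discovering a mismatch at the first CUDA call.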

So who should buy what?

Buy the DGX Spark if you...

- Build, fine-tune, or debug LLMs and generative AI models

- Need to run 70B–200B parameter models offline

- Work with sensitive data that can't leave your premises (healthcare, finance, legal)

- Want a desk-side AI development environment that mirrors cloud deployment

- Are a researcher or AI startup that wants production-grade hardware at a non-data-center price

- Need deep compatibility with the PyTorch/CUDA ecosystem

Buy the Jetson AGX Thor if you...

- Are building robots, autonomous vehicles, or edge AI systems

- Need real-time multi-sensor fusion (cameras, LiDAR, radar)

- Want to develop on the exact module you'll deploy in production hardware

- Work with Isaac Sim, ROS 2, or NVIDIA's physical AI toolchain

- Need MIG to run multiple AI models simultaneously with hard isolation

- Are an embedded systems engineer moving into AI

💡 For larger organizations: NVIDIA explicitly frames these as complementary tools, not competitors. The recommended workflow is to develop and fine-tune models on the DGX Spark, simulate and test in Omniverse, then deploy to Jetson Thor for real-world operation. If you're building a full physical AI pipeline, you might want both.

A few things worth knowing before you buy

The DGX Spark just got more expensive

The Founders Edition launched at $3,999 in October 2025. In February 2026, NVIDIA raised the price to $4,699, an increase of roughly 18%, citing global memory supply constraints. OEM variants from Acer, ASUS, Dell, Gigabyte, HP, Lenovo, and MSI are also available; partner pricing varies, so compare before you buy. The EU price has also risen to €4,800.
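For anyone checking the math on that increase, here is the calculation using the two US prices quoted above. The variable names are mine.

```python
launch_price = 3999   # USD, October 2025 Founders Edition price
current_price = 4699  # USD, after the February 2026 increase

increase_usd = current_price - launch_price
increase_pct = increase_usd / launch_price * 100

print(f"Increase: ${increase_usd} ({increase_pct:.1f}%)")
```

The exact figure sits a little under the rounded 18% headline number.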

The Jetson Thor developer kit is finite

NVIDIA has said this developer kit is a limited production run. When stock runs out, the expectation is that buyers will source the T5000 module directly for production deployment. If you want the full developer kit experience (complete board, all the I/O, easy setup), buy sooner rather than later.

The DGX Spark sold out in hours on launch day

When it launched on October 15, 2025, NVIDIA's own online store was showing a "sold out" message before most of the US woke up. Supply has improved since, but demand is clearly there. Micro Center remains one of the more reliable retail sources in the US.

Check the Docker integration if you're a container shop

Both machines support containerized AI workloads, but the DGX Spark's Ubuntu + CUDA environment makes Docker workflows considerably more straightforward. If your team deploys AI via containers (NIM microservices, Ollama, etc.), the Spark will feel more natural out of the box.

The bottom line

The confusion around these two machines is mostly NVIDIA's fault. They've priced them similarly, called them both compact Blackwell AI platforms, and announced them in close succession. But underneath the similar packaging, they're solving genuinely different problems.

If you're a developer, researcher, or AI team that wants to run and fine-tune large language models locally — with full CUDA compatibility, a polished software environment, and a clear path to cloud deployment — the DGX Spark is your machine. It's the best local AI development platform on the market right now.

If you're building a robot, an autonomous system, or any physical AI application that needs real-time sensor processing, multi-model GPU partitioning, and hardware designed to run in the real world — the Jetson AGX Thor is what you want. Nothing else in this price range comes close for that use case.

Neither machine is a mistake. The mistake is buying the wrong one.

Quick pick → AI development

NVIDIA DGX Spark — your desk-side LLM lab

Best for developers, researchers, and enterprise teams working with large language models, generative AI, and fine-tuning. Its better real-world LLM inference throughput (despite lower paper specs) and seamless fit with the CUDA ecosystem make it the clear choice for software-side AI work.

Quick pick → physical AI / robotics

NVIDIA Jetson AGX Thor — the robot's brain

Best for robotics engineers, autonomous system developers, and physical AI teams. The specialized hardware accelerators, MIG support, JetPack ecosystem, and production-identical module make it the only choice if you're building things that move and perceive.