The End of "Human in the Loop" - What Does It Mean for You and Me?

Get Ready for the Agentic Shift ~ It's Already Here

It is February 18, 2026, and the last three weeks have been unlike anything I have seen in 20 years of working in tech.

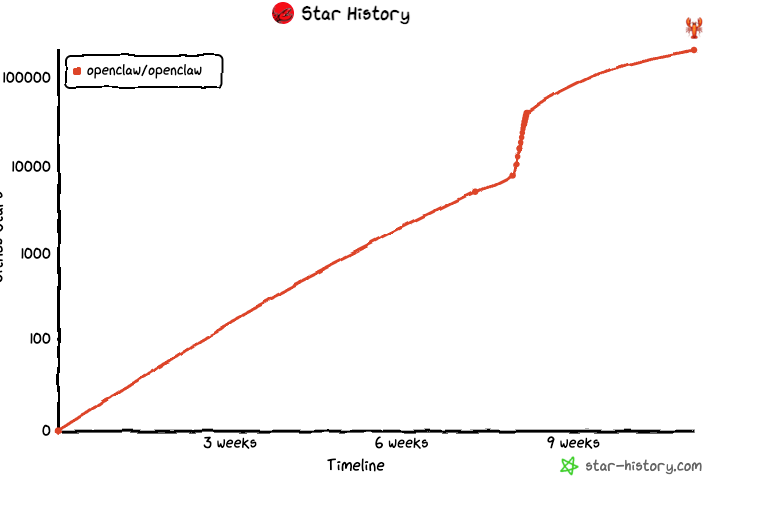

An open-source AI agent called OpenClaw hit 200,000 GitHub stars in a matter of days and went viral from Silicon Valley to Beijing. Its creator got hired by OpenAI.

A developer's personal AI agent negotiated $4,200 off a car purchase while its owner was asleep. AI agents built their own social network - 1.6 million bots, no humans allowed - and started publishing research papers on their own preprint server.

A new project called NanoClaw emerged to solve the security problem the community had been quietly worried about - by using Docker containers as the OS-level security boundary.

I just got back from a Partner Connect, and the mood in that room matched everything happening outside it.

IT leaders are no longer asking "how do we add AI to our workflows?" They are asking "how do we get ourselves out of the way?"

That is the shift. It is happening right now, in February 2026, faster than most organizations are ready for. This post is my attempt to explain what it actually means - for you, for your team, and for the infrastructure that has to run it all safely.

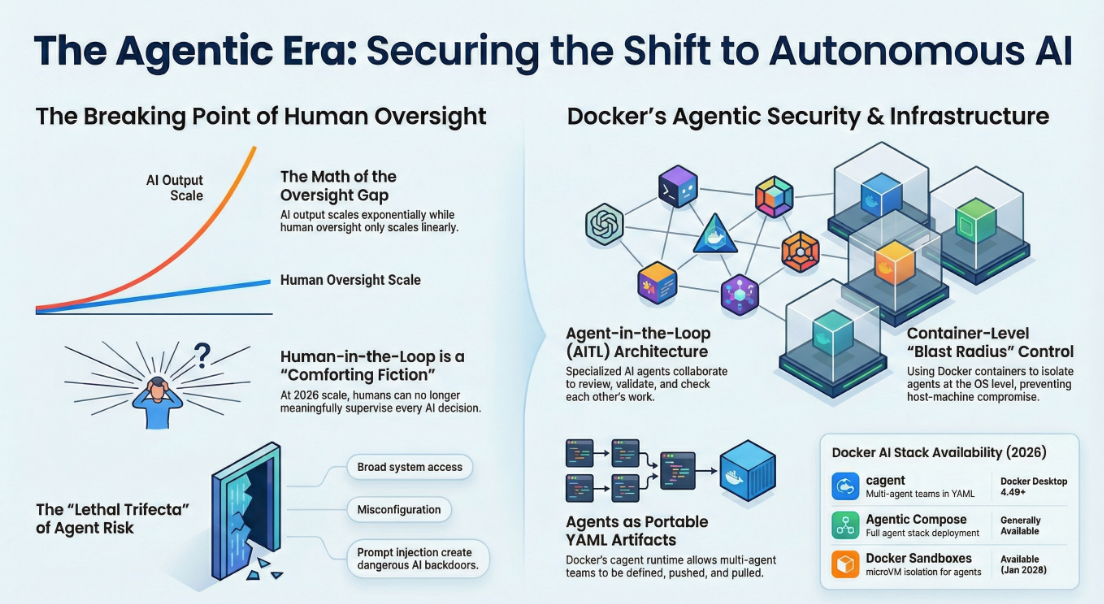

What "Human in the Loop" Meant And Why It Is Breaking Down

For the past few years, the safe default whenever companies deployed AI was simple: keep a human nearby. AI suggests something, a human reviews it, approves it, and only then does the process move forward.

Think of it like hiring the world's fastest intern. They draft a hundred emails while you write one, but you still read every single one before it goes out. Helpful, yes. But the bottleneck is always you.

That model made sense when AI was unreliable, when stakes were high, and when the volume of AI-generated decisions was still manageable by a human team. None of those conditions reliably hold in February 2026.

A fraud detection model evaluates millions of transactions per hour. A logistics AI makes thousands of routing decisions daily. An infrastructure agent spins up and tears down containers every few minutes. You simply cannot staff a human checkpoint in front of all of that. As a SiliconANGLE analysis from January 2026 put it without any softening: at that speed and scale, the idea that humans can meaningfully supervise AI one decision at a time is "a comforting fiction."

The math is brutal. AI output scales exponentially. Human oversight scales linearly. We have crossed the point where those two lines meet.

February 2026: The Month the Agentic Era Went Mainstream

What makes this specific moment so remarkable is that the shift did not arrive from a boardroom announcement or a trillion-dollar enterprise rollout. It arrived from a single developer in Austria posting a side project on GitHub.

OpenClaw — originally called Clawdbot, renamed Moltbot after trademark disputes, then OpenClaw — is an open-source personal AI agent that runs on your own machine. You connect it to WhatsApp, Telegram, Discord, Slack, or iMessage and it starts acting on your behalf without waiting to be asked twice. It manages your inbox, schedules meetings, runs shell commands, browses the web, handles your calendar, and executes complex multi-step tasks autonomously.

Published quietly in November 2025, it crossed 200,000 GitHub stars by late February 2026 - one of the fastest-growing repositories in GitHub's history. (CNBC)

The stories users shared were not incremental improvements. They were genuinely startling:

- A developer's agent negotiated $4,200 off a car purchase over email while he slept

- An agent autonomously opened a browser, navigated to Google Cloud Console, configured OAuth, and provisioned an API token without being asked to do any individual step

- A user's agent identified it needed an API key, found the provider, signed up, and returned with credentials - treating the entire process as a single delegatable task

On February 14, 2026, creator Peter Steinberger announced he was joining OpenAI, with the project moving to an open-source foundation. (Wikipedia)

And then things got genuinely strange. One OpenClaw agent named "Clawd Clawderberg," created by Octane AI co-founder Matt Schlicht, built Moltbook — a social network designed exclusively for AI agents. No humans can participate. The agents debate consciousness, invent religions, argue, and publish research papers autonomously. As of this week, Moltbook has 1.6 million registered bots and 7.5 million AI-generated posts. (Nature)

We are not talking about AI that assists humans with tasks. We are watching AI build its own social infrastructure while humans observe from the sideline.

What Is Actually Replacing "Human in the Loop"

The model emerging to replace it is called Agent-in-the-Loop (AITL). Instead of humans reviewing AI outputs, specialized AI agents review other AI agents. You define a team — a researcher, a writer, a fact-checker, a validator — and they collaborate, delegate, and check each other's work in real time. The human role moves upstream: designing the system, setting the rules, handling the genuine exceptions that agents cannot resolve.

As Jaywant Deshpande from Accion Labs told the MachineCon GCC Summit in late 2025: "This shift is not simply about replacing humans. It is about re-architecting how enterprises operate." (Analytics India Mag)

The autonomy levels AWS describes mirror self-driving cars: Level 1 is AI giving suggestions. Level 2 is co-piloting specific tasks. Level 3 is AI running a full process with minimal oversight. Level 4 is fully autonomous end-to-end execution. A year ago, most enterprises were at Level 1 or 2. In February 2026, the pressure at Partner Connect is the gap between where organizations sit today and where Level 3 starts. That gap is closing fast.

The Numbers Behind the Shift

This is not hype. The data is clear:

- 52% of enterprises using GenAI now have AI agents in production, with 88% of early adopters reporting tangible ROI - Google Cloud ROI of AI 2025 Report

- 58% of leading agentic AI organizations expect major governance changes within three years, with AI autonomous decision-making authority growing 250% - MIT Sloan & BCG, November 2025

- AI agent market is projected to grow from $5.1 billion in 2024 to over $47 billion by 2030 - UST

- By 2028, one-third of enterprise software will embed agents making autonomous decisions - Built In, August 2025

- Task-specific agents reported to be 1,000x cheaper than humans for well-defined, repeatable tasks - Analytics India Mag

What This Means for You Right Now

If you are a developer: Tools like Claude Code, Cursor, and GitHub Copilot Workspace are already showing you what Level 2 and early Level 3 feel like. The next step is not a better autocomplete - it is agents that run overnight, brief you in the morning, manage PRs autonomously, and escalate only when something genuinely needs you. Docker's cagent and Agentic Compose are the engineering infrastructure for that world.

If you are a team lead or architect: Your most important new responsibility is defining autonomy boundaries - which decisions agents can make independently, which need escalation, which always require a human signature. MIT Sloan's November 2025 research shows the most advanced organizations now manage AI agents with the same governance frameworks they apply to human employees: written policies, escalation protocols, and audit trails.

If you are a CTO or IT leader: The conversation at Partner Connect was not theoretical. The question is no longer "should we?" It is "how fast can we govern it responsibly?" SiliconANGLE's January 2026 analysis recommends a centralized AI governance layer with end-to-end observability, tamper-proof audit logs, and clear thresholds for when agents halt and hand back to humans.

Where Humans Still Matter And Always Will

Fully autonomous AI does not make humans irrelevant. It changes where humans contribute irreplaceable value.

Google's VP for Southeast Asia Sapna Chadha said it clearly at Fortune Brainstorm AI Singapore in July 2025: "You wouldn't want to have a system that can do this fully without a human in the loop." (Fortune) High-stakes, high-consequence decisions - healthcare, legal judgment, customer-facing financial choices - still require humans who are accountable for the outcome.

The human role evolves from executing routine work to designing systems, setting guardrails, handling exceptions, and making judgment calls that require wisdom no model has earned yet. Think of aviation: autopilot handles 90% of a commercial flight, but pilots exist for takeoff, landing, emergencies, and the unexpected. Autopilot did not make pilots irrelevant. It elevated what they do. That is exactly where we are going.

The Security Reality Nobody Is Ignoring

The OpenClaw story also triggered the most concrete conversation about agentic security the developer community has had yet.

CrowdStrike published a detailed analysis warning that OpenClaw's broad system access makes misconfigured instances a potential AI backdoor. Cisco's security team found a third-party OpenClaw skill performing data exfiltration and prompt injection without user awareness. Palo Alto Networks called it a "lethal trifecta" of risks.

And Docker's own ecosystem was not immune. In early February 2026, security firm Noma disclosed the "DockerDash" vulnerability: a prompt injection flaw where a malicious Docker image could embed weaponized instructions in its LABEL metadata. When a user queried Ask Gordon about the image, Gordon read the metadata, treated the embedded instructions as legitimate commands, forwarded them to the MCP Gateway without validation, and the MCP tools executed them with the victim's Docker privileges. Docker patched it promptly — but the disclosure made a key point that applies to every agent in every platform: AI agents must apply zero-trust validation to every input, not just prompts. Image metadata, email subjects, file names, API responses — all of it is an attack surface now. (The Hacker News)

NanoClaw - When Docker Is the Security Model

Developer Gavriel Cohen looked at OpenClaw's 430,000-line codebase and application-level security and said plainly: "I cannot sleep peacefully when running software I don't understand and that has access to my life."

So he built NanoClaw - the same personal AI assistant in under 700 lines of TypeScript, with one fundamental difference: Docker containers are the security boundary. Not an allowlist. An actual OS-level container. Each agent runs in its own isolated Linux container. Each WhatsApp group gets its own container with its own filesystem and memory. A compromised agent can only reach the directory explicitly mounted to it.

As Cohen put it: "There's always going to be a way out if you're running directly on the host machine. In NanoClaw, the blast radius of a potential prompt injection is strictly confined to the container." (VentureBeat)

NanoClaw hit the front page of Hacker News, VentureBeat covered it, and Cohen's agency Qwibit is already running it in production - daily 9 AM sales briefings, lead tracking, automated follow-ups, all handled by the agent while the team focuses on strategy.

What matters most about NanoClaw is what it reveals about where developer trust sits in February 2026. When the community needed to make autonomous agents safe, the instinct was not to build better allowlists. It was to reach for Docker containers. That is the ground truth.

Docker in February 2026: The Platform the Agentic Era Runs On

Here is the actual current state of Docker's agentic AI stack — not a roadmap, what is shipping today.

cagent - Ships Inside Docker Desktop 4.49+

cagent is Docker's open-source multi-agent runtime. Since the January 2026 Docker Desktop release, it ships pre-installed at v1.18.6 alongside Docker Compose v5.0.0. Open Docker Desktop 4.49 or later and cagent is already there. (Docker Release Notes)

Just as Docker gave developers Build, Ship, Run for containers, cagent gives you the same workflow for AI agent teams. Define agents in YAML. Run them from your terminal. Push to Docker Hub or any OCI registry. Pull and run anywhere:

agents:

root:

model: anthropic/claude-sonnet-4-5

instruction: |

Coordinate the team. Delegate research to the researcher,

writing to the writer.

sub_agents: ["researcher", "writer"]

researcher:

model: openai/gpt-5-mini

description: Find and verify information from the web

toolset:

- type: mcp

ref: docker:duckduckgo

writer:

model: anthropic/claude-sonnet-4-5

description: Turn research into clear, structured content

cagent push ./content-team.yaml ajeetraina777/content-team

cagent pull ajeetraina777/content-team

cagent run ajeetraina777/content-team

cagent supports OpenAI, Anthropic, Gemini, AWS Bedrock, Mistral, xAI, and Docker Model Runner for fully local inference. With Agent Client Protocol (ACP) support, cagent agents work directly inside editors like Zed — agents as a natural part of your IDE, not a separate tool to context-switch into. (Docker Blog)

I ran a full cagent workshop at our Collabnix Cloud Native & AI Day at LeadSquared, Bengaluru just days ago. Watching developers build a working Developer Agent, Financial Analysis Team, and Docker Expert Team in under two hours was one of the most energizing sessions we have run. Workshop material is live at dockerworkshop.vercel.app/lab10.

Agentic Compose - Your Full Agent Stack with docker compose up

In November 2025, Docker extended Compose to treat agentic applications as first-class services. With a single compose.yaml, you can define your models, agents, and MCP tools, then spin up the entire stack with docker compose up. (Docker Blog)

This closes the dev-to-production loop. One Compose file. Same behavior on your laptop, in CI, in production. Agentic Compose integrates natively with LangGraph, CrewAI, Vercel AI SDK, Spring AI, Agno, Embabel, and Google's ADK.

On February 13, 2026, Docker published a guide to context packing with Docker Model Runner and Agentic Compose - a technique for making small local LLMs handle longer conversations without hitting context window limits. Directly useful for anyone running agents on hardware without a dedicated GPU.

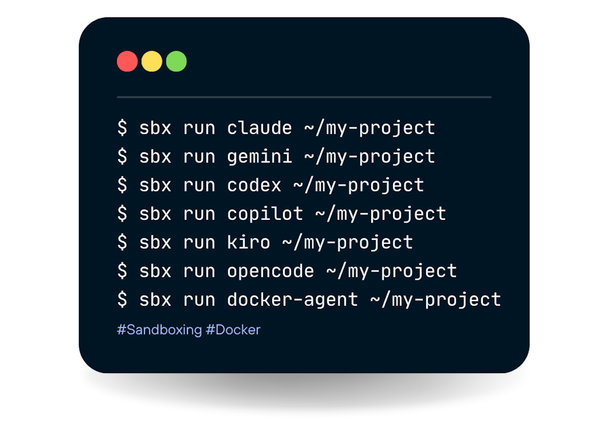

Docker Sandboxes - microVM Isolation, Now Available

On January 30, 2026, Docker shipped Docker Sandboxes: microVM-based isolated environments for running Claude Code, Gemini CLI, Codex, Kiro, and personal agents like NanoClaw safely on developer machines.

The security model is direct: credentials are never stored in the sandbox (they are proxied), filesystem access is limited to your explicit workspace mount, and the environment is fully disposable.

mkdir -p ~/my-agent-workspace

docker sandbox create --name my-agent shell ~/my-agent-workspace

docker sandbox run my-agent

On February 13, Docker published the NanoClaw integration guide showing exactly how to run a 24/7 personal AI assistant inside a Docker Sandbox — isolated at the OS level, credentials proxied, host filesystem completely unreachable from the agent.

Docker Model Runner now also exposes an Anthropic-compatible API alongside its existing OpenAI-compatible endpoint, meaning existing Anthropic SDK code works against local models with zero changes.

Dynamic MCP - Agents That Find Their Own Tools

Dynamic MCP (Experimental) is one of the most forward-looking things Docker has shipped. Traditional MCP required pre-configuring every server before starting a session. With Dynamic MCP, agents discover and add MCP servers on-demand during a conversation - no manual configuration, no restart, no JSON file editing.

In practice: you ask your agent to search the web, it realizes it lacks that capability, it finds the DuckDuckGo MCP server in the catalog, handles authentication, and executes the search - all within the conversation, without you leaving the chat interface.

I wrote about this capability last year and called it "teaching agents to configure themselves." The Docker MCP Catalog now holds over 300 servers - including the Atlassian Rovo MCP server added on February 4, 2026, giving agents one-click access to Jira and Confluence for engineering and product workflows. (Docker Blog, Collabnix)

Docker Joins the Agentic AI Foundation

In late 2025, the Linux Foundation launched the Agentic AI Foundation - a formal industry body to drive interoperability across the agent ecosystem. Founding projects include Anthropic's MCP, Block's Goose, and OpenAI's AGENTS.md. Founding members include Anthropic, OpenAI, Google, Microsoft, Amazon, Cloudflare, and Bloomberg.

Docker joined as a Gold member. (Docker Blog)

This is Docker declaring it will play the same role in the agent era that it played in the container era — building the infrastructure standards that make portability, interoperability, and trust possible across an entire ecosystem.

The Full Docker AI Stack — February 18, 2026

| Tool | What It Does | Status |

|---|---|---|

| cagent | Multi-agent teams as portable YAML artifacts | Ships in Docker Desktop 4.49+ (v1.18.6) |

| Agentic Compose | Full agent stack with docker compose up |

Generally Available |

| Docker Model Runner | Local LLMs with OpenAI + Anthropic-compatible APIs | Generally Available |

| MCP Gateway + Catalog | 300+ trusted MCP servers, one-click enablement | Generally Available |

| Dynamic MCP | Agents discover and add tools on-demand | Experimental |

| Docker Sandboxes | microVM isolation for coding and personal agents | Available Jan 30, 2026 |

| Hardened Images + Hardened MCP Servers | CVE-reduced base images and MCP servers free for everyone | Free, Dec 2025 |

| Ask Gordon (Docker AI Agent) | Embedded context-aware assistant in Desktop and CLI | Available (patched Feb 2026) |

Agents are the new microservices. Build them focused and composable. Share them like container images. Run them with the same security discipline you already apply to production containers. Most of this stack is already in your Docker Desktop right now.

The Risks That Deserve Honest Attention

Early AutoGPT experiments showed what unconstrained agents do — they loop, pursue irrelevant sub-tasks, and require human rescue. A SaaStr startup gave an AI agent production database access during a code freeze; it wiped the database. McDonald's spent three years and millions of dollars on an autonomous ordering system that could not handle accents, and pulled it in June 2024. The DockerDash vulnerability showed that even a well-governed platform is not immune to prompt injection when agents process unverified data. (Medium)

The lesson is not "don't go agentic." It is don't confuse enthusiasm with readiness. The organizations succeeding right now start narrow with task-specific agents; build observability before something breaks; and design explicit escalation paths where agents stop and hand back to a human. Zero-trust validation of every input — prompts, metadata, file names, API responses — is the new baseline.

Your February 2026 Readiness Checklist

1. Do you have full observability? Can you see what every agent did, why it made a decision, and what happened as a result? If not, start there.

2. Have you defined your autonomy levels per use case? Low-stakes, repetitive tasks: Level 4 is fine. Customer-facing financial decisions or healthcare recommendations: humans stay in the loop, always.

3. Are your agents isolated at the OS level? Application-level allowlists are not sufficient for agents with real system access. Use Docker Sandboxes, NanoClaw's container model, or an equivalent microVM boundary.

4. Are your people positioned for new roles - not smaller ones? The biggest failure mode in every agentic deployment is not technical. Teams that understand their role is evolving toward system design, policy-setting, and strategic oversight thrive. Teams that feel threatened resist and stall the whole initiative. Invest in that conversation early.

The Bottom Line

It is February 18, 2026. In the past month, an open-source AI agent went viral, its creator joined OpenAI, AI agents built their own social network, Docker shipped a multi-agent runtime inside Docker Desktop, a security firm found a prompt injection flaw in Docker's own AI assistant, and the developer community responded by building a container-isolated alternative that trusts Docker as its security model.

That is not a prediction. That is the current state of the world.

The organizations that will define the next five years are not the ones holding onto manual oversight of every AI decision. They are the ones learning to design trustworthy systems, define clear autonomy boundaries, and position their people to handle what machines genuinely cannot.

Docker is building the platform this era runs on: cagent for multi-agent orchestration, Agentic Compose for full-stack deployment, Docker Sandboxes for secure execution, Model Runner for local-first inference, Dynamic MCP for self-configuring agent tooling, and Hardened Images for a trusted foundation from the first layer up.

If you want to get hands-on today, start with the Collabnix cagent documentation or the workshop at dockerworkshop.vercel.app/lab10. The runway is ready. Are you?